12:16:39,472 - segment_wiki - INFO - processed #2000000 articles (at 'Dark Is the Night for All' now) 12:15:17,821 - segment_wiki - INFO - processed #1900000 articles (at 'What Rhymes with Cars and Girls' now) 12:14:02,378 - segment_wiki - INFO - processed #1800000 articles (at 'Yannima Tommy Watson' now) 12:12:49,631 - segment_wiki - INFO - processed #1700000 articles (at 'Sri Lanka Army Medical Corps' now)

12:09:19,355 - segment_wiki - INFO - processed #1400000 articles (at '1914 Penn State Nittany Lions football team' now) 12:04:34,628 - segment_wiki - INFO - processed #1000000 articles (at 'National Institute of Technology Agartala' now) 12:03:19,111 - segment_wiki - INFO - processed #900000 articles (at 'Baya Rahouli' now) 12:00:43,973 - segment_wiki - INFO - processed #700000 articles (at 'Patti Deutsch' now) 11:59:26,795 - segment_wiki - INFO - processed #600000 articles (at 'Ann Jillian' now)

11:58:02,725 - segment_wiki - INFO - processed #500000 articles (at 'Stephen Crabb' now)

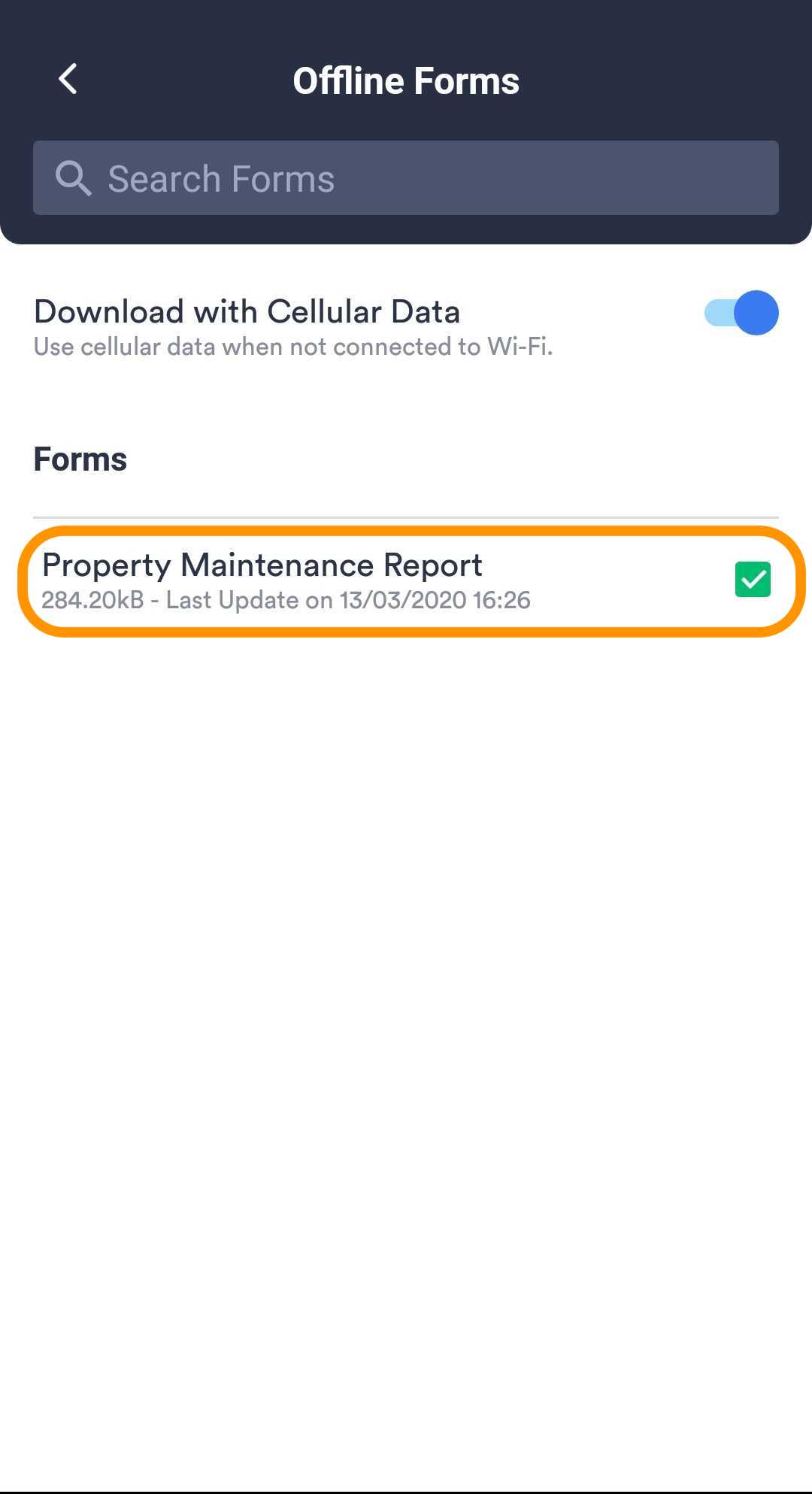

11:56:38,493 - segment_wiki - INFO - processed #400000 articles (at 'Georgia College & State University' now) 11:55:09,739 - segment_wiki - INFO - processed #300000 articles (at 'Yuncheng University' now) 11:53:26,704 - segment_wiki - INFO - processed #200000 articles (at 'Kiso Mountains' now) 11:51:34,063 - segment_wiki - INFO - processed #100000 articles (at 'Maquiladora' now) 11:49:36,808 - segment_wiki - INFO - running /home/mrus/.virtualenvs/local3.9/lib/python3.9/site-packages/gensim/scripts/segment_wiki.py -i -f enwiki-latest-pages-articles.xml -o enwiki-latest-pages-articles.json I didn’t bother toĬheck in-depth, but 5,792,326 out of 6,424,367 didn’t sound too bad at all. Less time in total, as a bit of the content was skipped. Wikipedia consists of 6,424,367 articles, meaning it should takeĪround two hours to convert all articles to JSON. I kept the defaultįor -w (the number of workers), which is 31. On my machine I got roughly 50,000 articles per minute. Going to use wget -c here, so that we can continue a partial download, just in Downloading Wikipediaįirst, let’s download the latest XML dump of the English Wikipedia. So today I thought it might be a great day to try a different approach. At some point, it just became too impractical to Pretty good, I ended up with a 250GB database back at the time, which Using uveira, my own command line tool for that. Than the actual dump itself, which at time of writing is 81GB in total sizeĪ year ago I tried this experiment once and used a tool calledĭumpster-dive to load the Wikipedia dump into a MongoDB and access it Workflow – which is terminal-based – and doesn’t end up eating more storage I was looking for a more lightweight approach that integrated well into my Have some pretty terrible prerequisites, like for example Java. 
Or through other means, but they’re all quite cumbersome to use and in parts That try to offer an offline version of Wikipedia, either based on these dumps Ready-to-use apps (like Kiwix, Minipedia, XOWA, and many more) Unfortunately, these database dumps aren’tĮxactly browsable the way they’re offered by Wikipedia. Unfortunately crawling Wikipedia’s HTML for offline use is not something that’sįeasible - and it’s not even necessary as Wikipedia offers database dumpsįor everyone to download for free. Some documentation on real life, for which I use Wikipedia most of the time. However, I oftentimes ended up in situations, in which I needed to look up Machine, so that I wasn’t dependent on connectivity to be able to work. Speeds and stable connections (<3 Seoul) or, well, not so much so.īack at the time I took care to have documentation stored offline on my Depending on where in the world I was, I either had superb internet Working stationary usually don’t experience. Travelled quite a lot and hence had to deal with all sorts of things that people As some of you might know, up until the global situation went sideways I
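To give an idea of what the converted file looks like from the terminal side, here is a minimal Python sketch for pulling a single article out of the `enwiki-latest-pages-articles.json` file named in the segment_wiki log. It assumes the output is one JSON object per line with the keys `title`, `section_titles` and `section_texts`, which is how the gensim documentation describes it; the helper names `iter_articles`, `find_article` and `render` are made up for this illustration and are not part of gensim or uveira.

```python
import json
from typing import Iterator, Optional


def iter_articles(path: str) -> Iterator[dict]:
    """Yield one article dict per non-empty line of a JSON-lines file."""
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if line:
                yield json.loads(line)


def find_article(path: str, title: str) -> Optional[dict]:
    """Linear scan for the first article whose title matches exactly."""
    for article in iter_articles(path):
        if article.get("title") == title:
            return article
    return None


def render(article: dict) -> str:
    """Join the section titles and texts into plain text for the terminal."""
    parts = [article["title"]]
    # Assumption: section_titles and section_texts are parallel lists.
    for heading, text in zip(article["section_titles"], article["section_texts"]):
        parts.append(f"\n== {heading} ==\n{text.strip()}")
    return "\n".join(parts)


if __name__ == "__main__":
    # The file name is taken from the log; the title is just an example.
    hit = find_article("enwiki-latest-pages-articles.json", "Maquiladora")
    print(render(hit) if hit else "not found")
```

A plain scan like this reads the file line by line, so memory stays low, but every lookup may walk through the whole file. For regular use, some kind of title index would probably be the next step.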
